Experience & Journey

Software Engineer

@ Techdojo

- Built AI agents with MCP servers to automate FinTech workflows, boosting efficiency by 80%.
- Managed CI/CD pipelines with GitHub Actions and Docker for faster deployments.
- Led React Native project development and performance optimization.

Software Engineer Intern

@ Techdojo

- Developed Virtual Assistant and VR Mall applications with immersive 3D environments.
- Enhanced app performance by optimizing React and improving render efficiency (reduced load time by 40%).
- Contributed to REST API integrations and state management using Redux Toolkit.

Project Portfolio

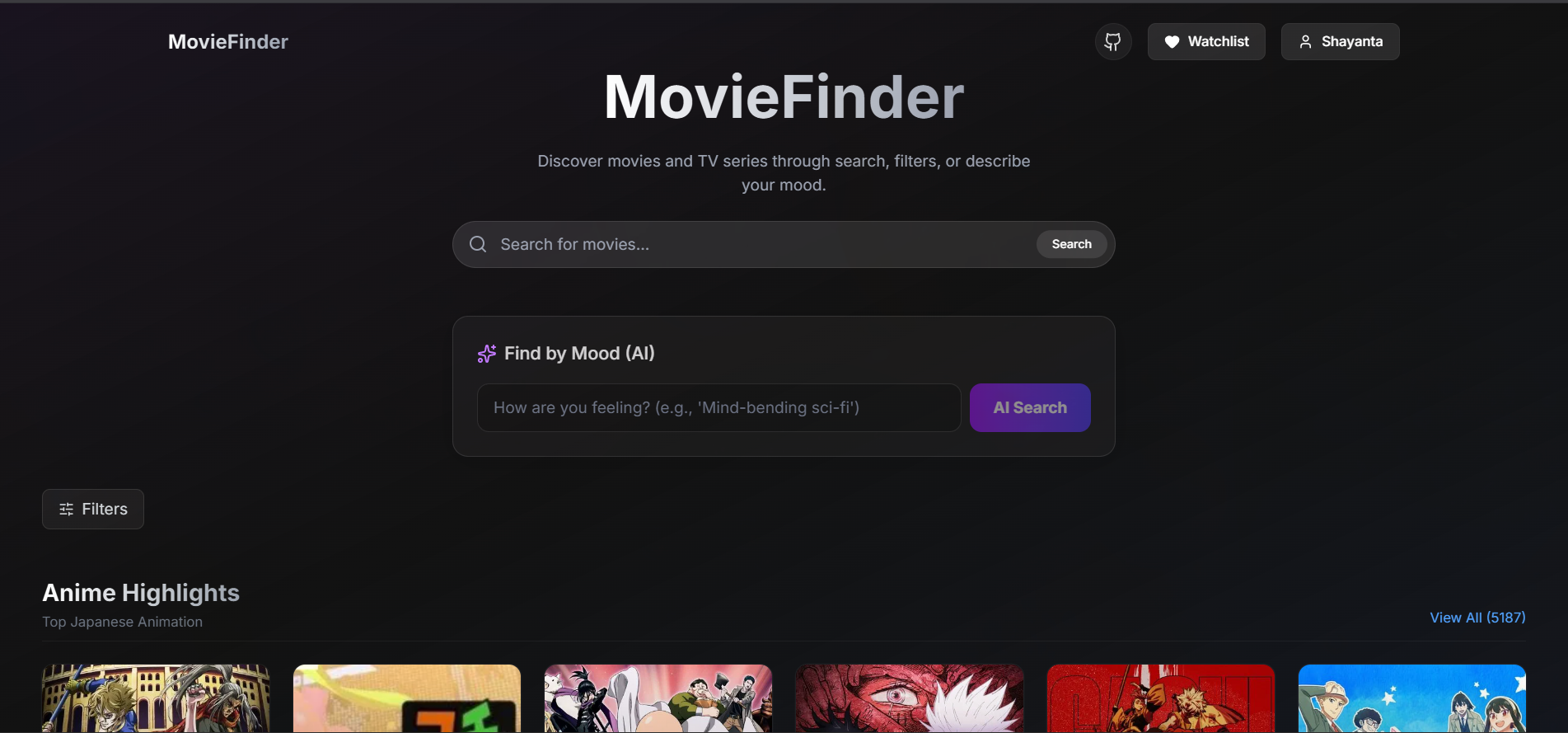

MovieFinder AI

- Developed a movie recommendation system that suggests movies from user-provided descriptions using Next.js, LangChain, and a LLaMA model.
- Summarizes the similarity match and recommends movies or series based on plot.
- Ranks the best seasons by scraping other websites.
- Tells users whether a title is worth watching.

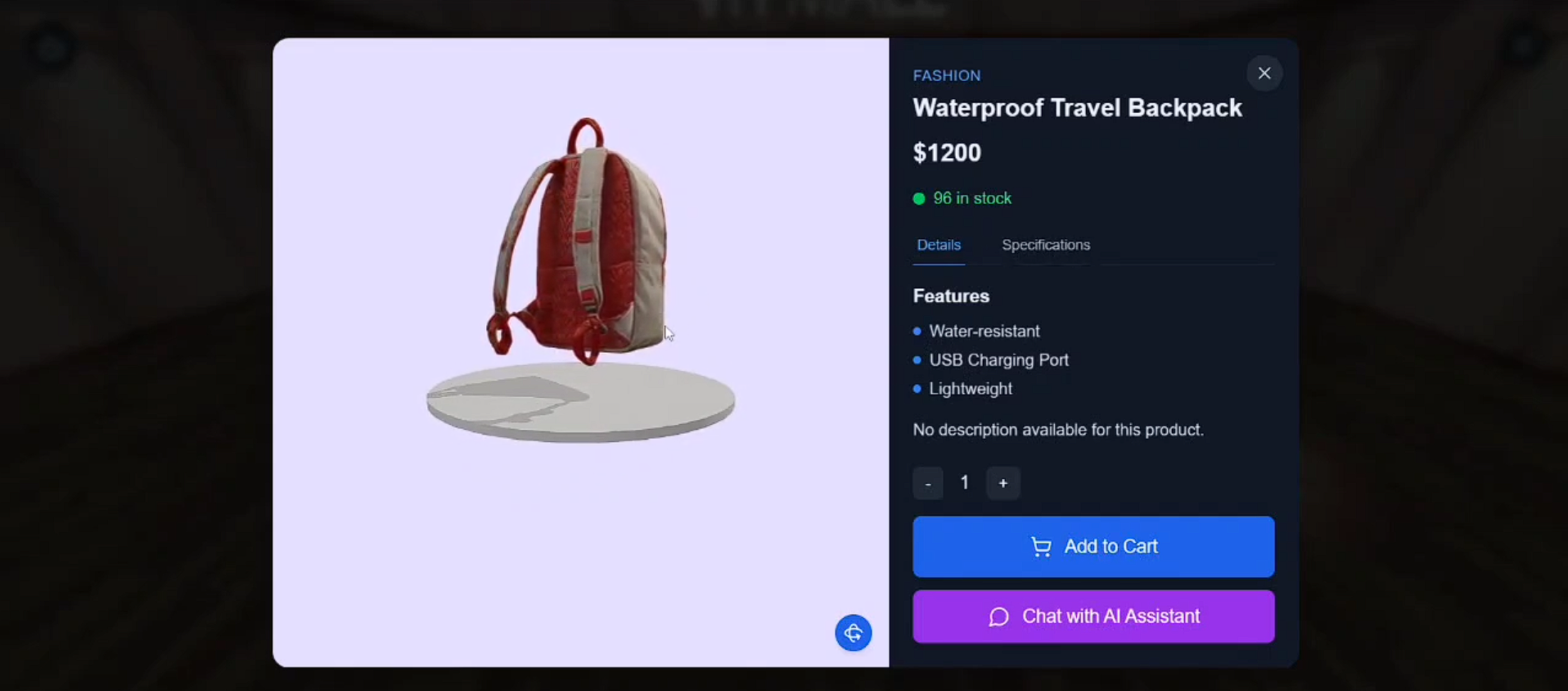

VR Mall: Immersive Commerce

VR Mall is an immersive virtual shopping platform that allows users to explore a 3D mall, interact with realistic product models, and make purchases in an engaging digital environment. Users can register, select shopping preferences, navigate using mouse and keyboard controls, and access detailed product information. An AI chatbot assists with inquiries, while a 3D shopping cart enables seamless checkout. This project enhances e-commerce by integrating interactive 3D elements, making shopping more intuitive and engaging.

Human Resource Management Extended

- With a tree-like organogram and many compact features, we intend to implement the leave and attendance system so that an employee or admin needs minimal training or supervision to use the product.
- Upper management gets a dashboard that aggregates many features, giving a high-level view of the overall attendance and leave situation so they can set and optimize HR principles and help the organization get maximum value from its human resources.

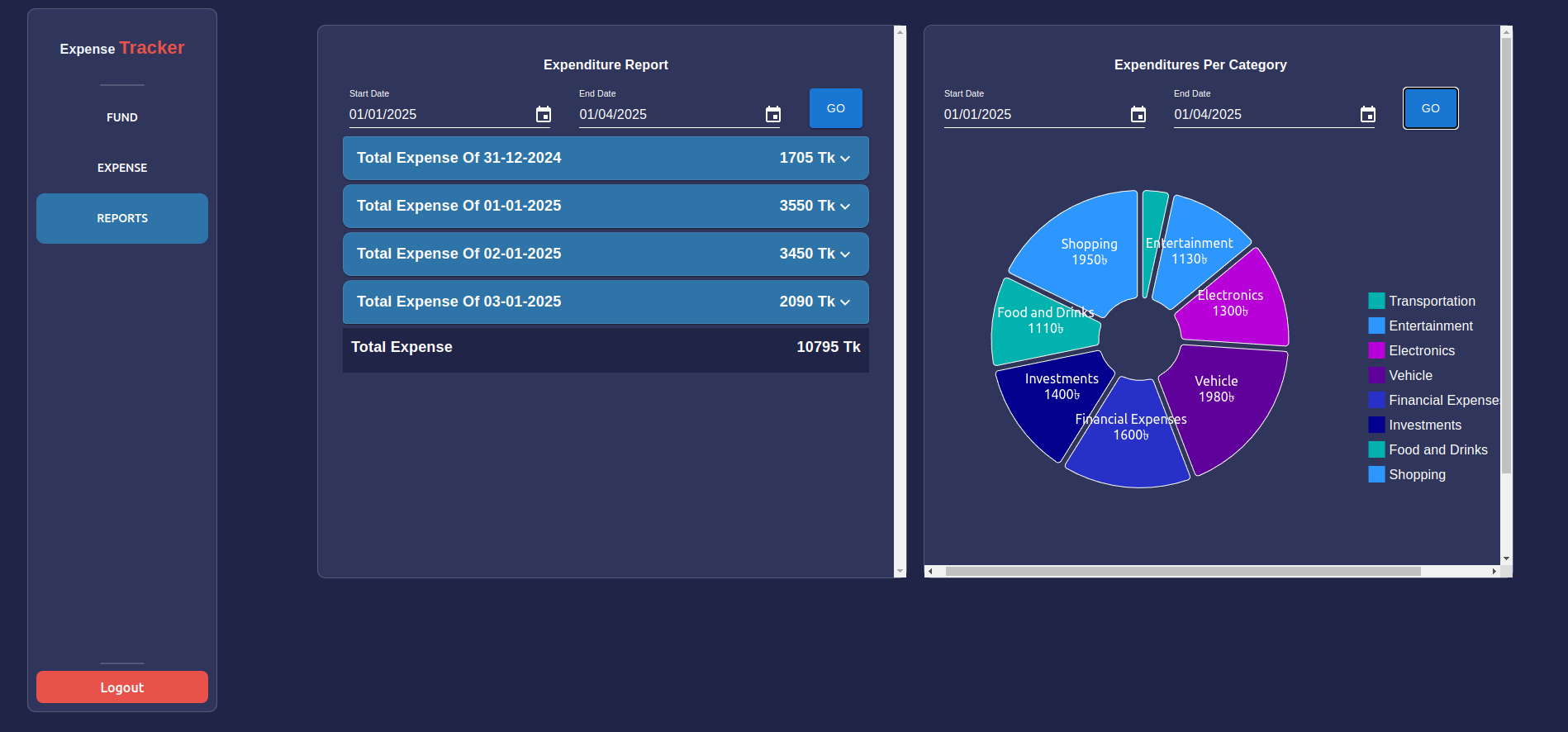

Expense Tracker App

- Built a responsive expense tracker in React.js with real-time expense visualization and analytics.
- Implemented a JavaScript-based predictive model to forecast upcoming expenses.

Core Competencies

Broader, deeper capabilities combining skills, knowledge, and behaviors to solve complex problems.

AI-Driven Recommendation Systems

Designs and implements intelligent recommendation engines that interpret natural language inputs, leverage large language models, and integrate multimodal data to deliver personalized content suggestions.

Immersive 3D Commerce Engineering

Builds interactive virtual environments using WebXR and three.js, coupling real‑time 3D rendering with AI assistants to create engaging, user‑centric e‑commerce experiences.

Full‑Stack Data‑Centric Application Architecture

Creates end‑to‑end web applications that combine responsive front‑ends, robust back‑ends, and data pipelines for analytics, forecasting, and real‑time visualizations.

Strategic HR Tech Solutions

Develops scalable human‑resource platforms with hierarchical visualizations, automated attendance/leave workflows, and executive dashboards for data‑driven decision making.

Autonomous Agentic Workflow Research

Conducts scholarly research on autonomous agent architectures, focusing on efficiency metrics and integration of LLMs into complex workflow pipelines.

Technical Arsenal

The languages, frameworks, and tools that power my production environments.

Programming Languages

JavaScript (Expert)

Python (Expert)

Frameworks & Libraries

Next.js (Advanced)

React (Expert)

Backend & Runtimes

Node.js (Expert)

Tools & Databases

Vector Labs (Advanced)

Research & Publications

A timeline of contributions to distributed intelligence and agentic systems.

Human trajectory imputation model: A hybrid deep learning approach for pedestrian trajectory imputation

2025

Pedestrian trajectories are crucial for self-driving cars to plan their paths effectively. The sensors installed in these vehicles, despite being state-of-the-art, often perceive the surrounding environment inaccurately because of technical challenges in adverse weather conditions, interference from other vehicles' sensors and electronic devices, and signal reception failure, which leaves the trajectory data incomplete. Trajectory imputation is therefore crucial for real-time decision making in autonomous driving. Previous attempts to address this issue, such as statistical inference and machine learning approaches, have shown promise. Yet the landscape of deep learning is rapidly evolving, with new and more robust models emerging. In this research, we propose an encoder–decoder architecture, the Human Trajectory Imputation Model (HTIM), to tackle these challenges. This architecture aims to fill in the missing parts of pedestrian trajectories. The model is evaluated using the Intersection Drone (inD) dataset, which contains trajectory data recorded at suitable altitudes and preserves naturalistic pedestrian behavior, with varied dataset sizes. To assess the effectiveness of our model, we use L1, MSE, quantile, and ADE losses. Our experiments demonstrate that HTIM outperforms the majority of the state-of-the-art methods in this field, indicating its superior performance in imputing pedestrian trajectories.

HTIM: A Hybrid Deep Learning Approach For Pedestrian Trajectory Imputation

2024

Pedestrian trajectories are crucial for self-driving cars to plan their paths effectively. The sensors installed in these vehicles, despite being state-of-the-art, often perceive the surrounding environment inaccurately because of technical challenges in adverse weather conditions, interference from other vehicles' sensors and electronic devices, and signal reception failure, which leaves the trajectory data incomplete. Trajectory imputation is therefore crucial for real-time decision making in autonomous driving. Previous attempts to address this issue, such as statistical inference and machine learning approaches, have shown promise. Yet the landscape of deep learning is rapidly evolving, with new and more robust models emerging. In this research, we have proposed an encoder-decoder architecture, the Human Trajectory Imputation Model (HTIM), to tackle these challenges. This architecture aims to fill in the missing parts of pedestrian trajectories. The model has been evaluated using the Intersection Drone (inD) dataset, which contains trajectory data recorded at suitable altitudes and preserves naturalistic pedestrian behavior, with varied dataset sizes. To assess the effectiveness of our model, we have used L1, MSE, quantile, and ADE losses. Our experiments have demonstrated that HTIM outperforms the majority of the state-of-the-art methods in this field, indicating its superior performance in imputing pedestrian trajectories.

PTIN: Enriching Pedestrian Safety with an LSTM-GRU-Transformer Based Trajectory Imputation System for Autonomous Vehicles

2023

Human trajectory imputation has been used for decades in surveillance, sports analytics, delivery and logistics, traffic management, and other fields. Recently, autonomous vehicles (AVs) have entered this realm. These vehicles have joined the transportation landscape because of their safety, efficiency, and convenience. Equipped with advanced sensors, they analyze and navigate critical environments. Among the data from these sensors, GPS-based trajectory data plays a vital role in motion planning. However, challenges such as signal loss due to obstructions, electromagnetic noise, and technical constraints result in missing or erroneous trajectory points. Drones, positioned higher in the sky, offer advantages in signal reception and obstacle avoidance, which is why we use the inD dataset. The techniques addressed here aim to ensure reliable, real-time, and accurate autonomous vehicle operation. With the stringent real-time demands …

Clustering as a Catalyst for Big Data Classification (CC-BC)

2023

In supervised learning, data classification is the method of categorizing data to facilitate data mining processes for informed decision-making. The central aim of a classification model is to accurately predict the category of both familiar and unfamiliar instances. Classification models in machine learning are usually trained with datasets in which the instances are labeled. This paper explores an alternative way of constructing classification models, based on the similarities of the instances rather than on labels annotated by experts. Labeling data is a resource-intensive and time-consuming process that is incredibly challenging when dealing with large datasets, known as big data. The proposed methodology clusters the big data and develops classifiers based on these clusters, bypassing the predefined class labels. This approach enhanced the performance of the classifier. Moreover, the …

Let's build the future together.

Let's solve something for dopamine!